Hi, I’m currently writing my own custom render pipeline and want one of the aux outputs of an offscreen buffer to be a lighting buffer that is written to with integers.

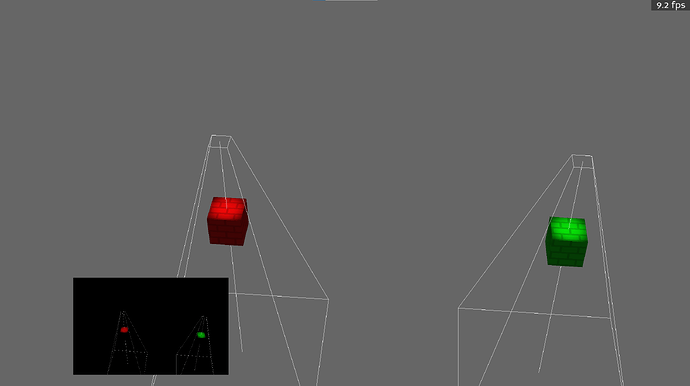

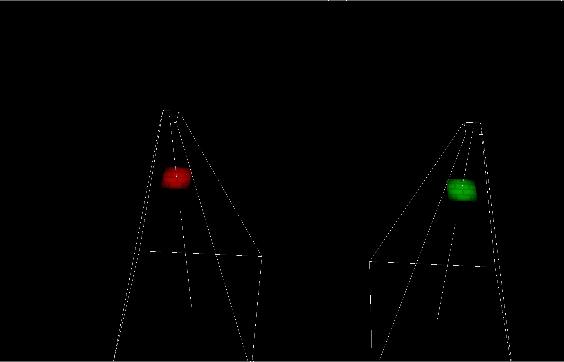

The images above show the float-based lighting buffer (you can see the light’s debugging frustum; ignore that).

Only areas that have received lighting show up in the buffer; everything that does not receive lighting is black, vec4(0, 0, 0, 0). Currently I am using float textures for this, but when I switch to writing the color values as integers, the texture is completely black (which I take to mean the values are not being written to the buffer).

Here is the basic code I am using to write and output float values:

Python

    import panda3d.core as p3d
    from panda3d.core import (
        FrameBufferProperties, GraphicsOutput, GraphicsPipe, WindowProperties,
    )

    lighting_texture = p3d.Texture('lightingtexture')

    win_props = WindowProperties.size(512, 512)
    fb_props = FrameBufferProperties()
    fb_props.set_rgb_color(True)
    fb_props.set_depth_bits(24)
    fb_props.setAuxRgba(3)  # request three aux RGBA attachments

    buffer = base.graphicsEngine.make_output(
        application.base.pipe, "offscreen buffer", -2,
        fb_props, win_props,
        GraphicsPipe.BF_refuse_window,
        application.base.win.get_gsg(), application.base.win
    )

    # Bind the lighting texture to the third aux attachment and clear it each frame
    buffer.add_render_texture(lighting_texture, GraphicsOutput.RTMBindOrCopy, GraphicsOutput.RTPAuxRgba2)
    buffer.setClearActive(GraphicsOutput.RTPAuxRgba2, 1)
    buffer.setClearValue(GraphicsOutput.RTPAuxRgba2, (1.0, 1.0, 1.0, 0.0))

    buffer_cam = application.base.make_camera(buffer)
    buffer_cam.reparent_to(camera)  # camera is a pointer to base.cam

Basic Shader Code

    out vec4 o_aux2; // lighting aux
    ...
    o_aux2 = vec4(light_color.r, light_color.g, light_color.b, 1.0); // puts light color in

This code works well, but I want raw integers. I am trying to avoid converting between float and integer because of precision issues. The plan is to store the light index from “p3d_LightSourceParameters” in the alpha channel, so that post-process shaders can later use it to look up the position and direction of the light.
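For comparison, here is a minimal pure-Python sketch of the float route mentioned above, assuming the index is written to an 8-bit normalized channel and stays in the range 0..255. The helper names are hypothetical, float32 is emulated with `struct`, and the model only covers clamp, scale and round; it does not describe what any particular driver or Panda3D itself does.

```python
import math
import struct


def to_float32(x):
    """Round a Python float to the nearest 32-bit float, as a shader would hold it."""
    return struct.unpack("f", struct.pack("f", x))[0]


def encode_index(index):
    """Value a fragment shader would write to the alpha channel (hypothetical helper)."""
    if not 0 <= index <= 255:
        raise ValueError("index must fit in 8 bits")
    return to_float32(index / 255.0)


def store_unorm8(value):
    """Emulate float -> 8-bit normalized storage: clamp to [0, 1], scale, round."""
    clamped = min(max(value, 0.0), 1.0)
    return int(math.floor(clamped * 255.0 + 0.5))


def decode_index(stored_byte):
    """Emulate sampling the texel as a float and recovering the index."""
    sampled = to_float32(stored_byte / 255.0)
    return int(math.floor(sampled * 255.0 + 0.5))


# Check that every 8-bit index survives write -> store -> sample -> decode.
round_trip_ok = all(
    decode_index(store_unorm8(encode_index(i))) == i for i in range(256)
)
print(round_trip_ok)  # prints True
```

Under these assumptions every index from 0 to 255 survives the round trip. The sketch does not cover larger index ranges, blending, or texture filtering, any of which could change the stored alpha value.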

My integer code is as follows:

Python

    import panda3d.core as p3d
    from panda3d.core import (
        FrameBufferProperties, GraphicsOutput, GraphicsPipe, WindowProperties,
    )

    lighting_texture = p3d.Texture('lighting texture')
    # Unsigned-int component type with the rgba8 format
    lighting_texture.setup2dTexture(512, 512, p3d.Texture.T_unsigned_int, p3d.Texture.F_rgba8)

    win_props = WindowProperties.size(512, 512)
    fb_props = FrameBufferProperties()
    fb_props.set_rgb_color(True)
    fb_props.set_depth_bits(24)
    fb_props.setAuxRgba(3)  # request three aux RGBA attachments

    buffer = base.graphicsEngine.make_output(
        application.base.pipe, "offscreen buffer", -2,
        fb_props, win_props,
        GraphicsPipe.BF_refuse_window,
        application.base.win.get_gsg(), application.base.win
    )

    # Bind the lighting texture to the third aux attachment and clear it each frame
    buffer.add_render_texture(lighting_texture, GraphicsOutput.RTMBindOrCopy, GraphicsOutput.RTPAuxRgba2)
    buffer.setClearActive(GraphicsOutput.RTPAuxRgba2, 1)
    buffer.setClearValue(GraphicsOutput.RTPAuxRgba2, (1.0, 1.0, 1.0, 0.0))

    buffer_cam = application.base.make_camera(buffer)
    buffer_cam.reparent_to(camera)  # camera is a pointer to base.cam

Basic Shader Code:

    out uvec4 o_aux2; // lighting aux
    ...
    o_aux2 = uvec4(255, 0, 0, 255); // using red for testing

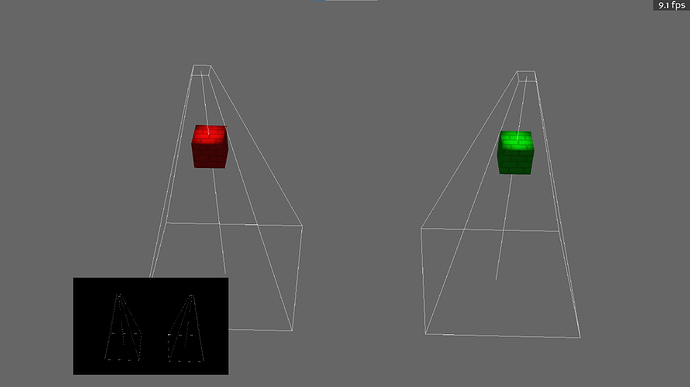

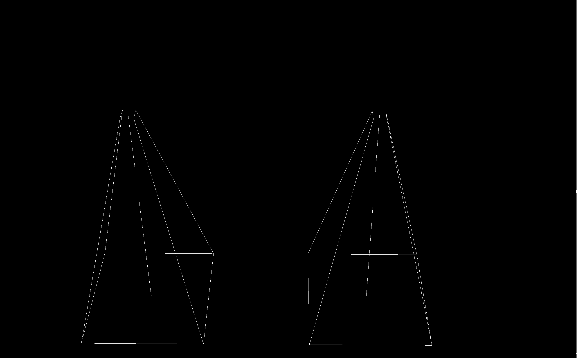

Integer results, shown above: a completely blank texture.

The texture should be mostly red, since that is what the shader code writes, but it still comes out black (although the light’s frustum lines are still visible). I have tried all kinds of texture formats and component types (signed ints, unsigned ints, unsigned bytes, rgba8i, rgba32), but none fixed the problem.

Could this have something to do with how the offscreen buffer is initialized?