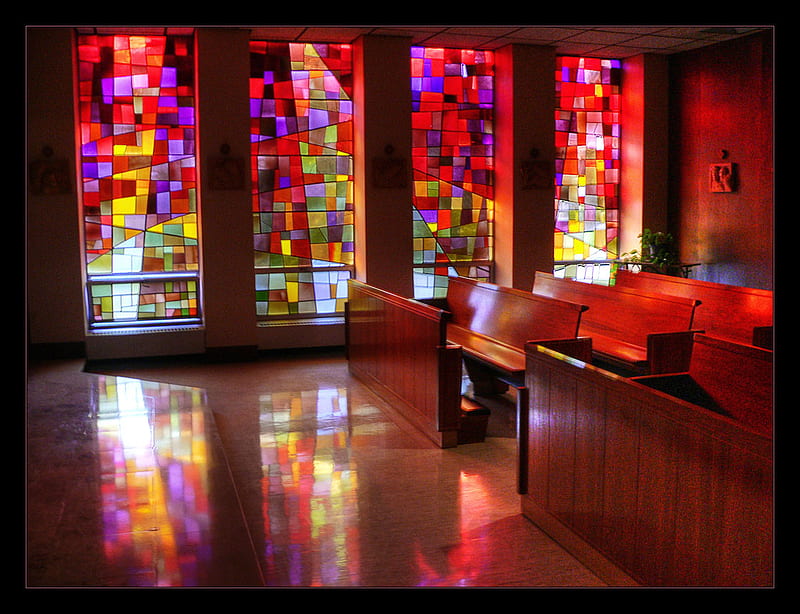

In the real world, light changes colour as it passes through a translucent object. Later, falling on some other object, it “colors” it. An example would be a stained glass window:

I am trying to get a similar effect in Panda3D, so far without success.

Below is a minimalist code example:

```python
from direct.showbase.ShowBase import ShowBase
from panda3d.core import TransparencyAttrib, PointLight

base = ShowBase()

# Foreground teapot: small, red, half transparent.
foreground = base.loader.loadModel("teapot")
foreground.reparentTo(base.render)
foreground.setPos(0, 5, 0)
foreground.setTransparency(TransparencyAttrib.M_alpha)
foreground.setColorScale(1, 0, 0, .5)
foreground.setScale(.25)

# Background teapot: original size and colour.
background = base.loader.loadModel("teapot")
background.reparentTo(base.render)
background.setPos(0, 10, -2)

# Point light behind and slightly above the camera, with shadows enabled.
light = PointLight('light')
light = base.render.attachNewNode(light)
light.setPos(0, -1, 1.25)
base.render.setLight(light)
light.node().setShadowCaster(True, 8192, 8192)

# Shadows require the automatic shader generator.
base.render.setShaderAuto()

base.run()
```

The code places two teapots in the scene. The more distant (larger) one, left in its original colour, serves as the background. The closer (smaller) one is modified: I scaled it down to a quarter of its size and applied a colour scale that keeps only the red (R) channel and sets the alpha (A) channel to 0.5, making it half transparent. In addition, I placed a light source behind the camera (behind the observer’s back) and slightly above it, which not only illuminates the scene but also casts shadows.

It seems to me that in the real world such a setup should result in two effects:

- The background teapot, where we observe it through the foreground teapot, will be partially visible, albeit tinted red.

- On the background teapot, there should be a translucent, reddish shadow, produced by the light passing through the foreground teapot.
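To make the second expectation concrete, here is a rough sketch in plain Python (not Panda3D code). It assumes a toy filter model in which light passing through a surface is blended between "unchanged" and "multiplied by the surface colour" according to the surface's alpha. The function name `filtered_light` and the model itself are assumptions made purely for illustration; they do not describe how Panda3D or real glass computes anything.

```python
def filtered_light(light_rgb, filter_rgb, filter_alpha):
    """Toy model (an assumption, not a Panda3D API): alpha = 0 lets the
    light through unchanged, alpha = 1 multiplies it fully by the
    filter colour."""
    return tuple(
        l * ((1.0 - filter_alpha) + filter_alpha * f)
        for l, f in zip(light_rgb, filter_rgb)
    )


white_light = (1.0, 1.0, 1.0)

# Values taken from foreground.setColorScale(1, 0, 0, .5)
teapot_rgb = (1.0, 0.0, 0.0)
teapot_alpha = 0.5

# What I would expect to reach the background teapot inside the shadow.
expected = filtered_light(white_light, teapot_rgb, teapot_alpha)
print(expected)  # (1.0, 0.5, 0.5)

# For comparison: a fully opaque black occluder blocks all light.
blocked = filtered_light(white_light, (0.0, 0.0, 0.0), 1.0)
print(blocked)  # (0.0, 0.0, 0.0)
```

Under this simplified model, the shadowed area of the background teapot would receive reddish light rather than none.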

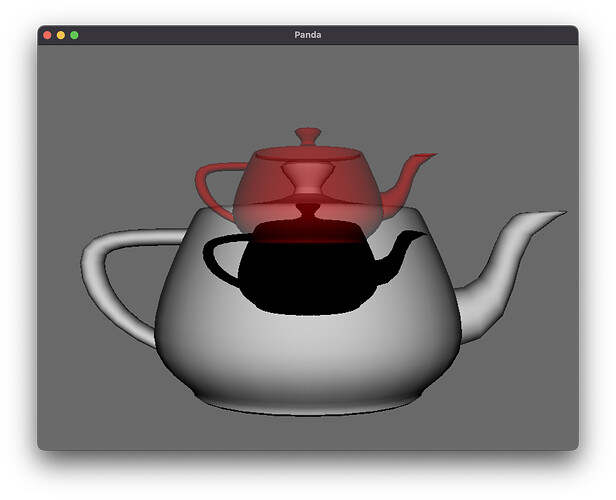

And here is the actual effect obtained in Panda3D:

As you can see, while the first effect was rendered correctly, the second one - not at all. Instead of a reddish light, we see a completely black shadow that completely ignores the translucency of the red teapot.

Does anyone have a solution to this problem? How can I get the effect I expect?