I am reading movie files at a higher bit depth than the typical 8 bits per channel. I have two pipelines for doing it. One runs in my own external thread using OpenImageIO (GitHub: AcademySoftwareFoundation/OpenImageIO), a format-agnostic library for reading, writing, and processing images, aimed at VFX applications.

The other uses Panda3D's MovieTexture, which decodes through FFmpeg.

I really like the efficiency of Panda3D's built-in MovieTexture. It handles seeking, loading, and overall management well. The problem is that it only delivers 8 bits per channel (24-bit RGB) data to the texture.

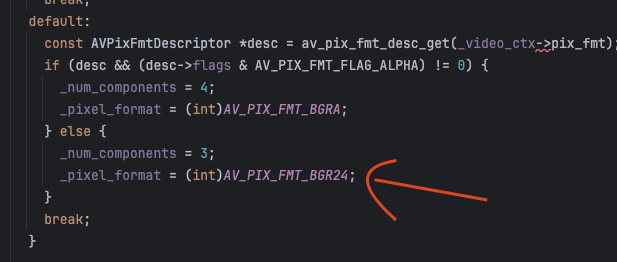

Digging into the Panda3D code, I found the conversion that is done at line 131 of `ffmpegVideoCursor.cxx`.

My first instinct was to change it to this format from the FFmpeg docs:

```cpp
_pixel_format = (int)AV_PIX_FMT_BGR48LE;
```

and then change the texture to the matching bit depth.
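For reference, a quick sketch of the byte sizes involved, in plain Python. It assumes tightly packed rows with no padding (real FFmpeg frames may use a larger linesize), and `frame_layout` is a hypothetical helper, not a Panda3D or FFmpeg function. Going from `AV_PIX_FMT_BGR24` to `AV_PIX_FMT_BGR48LE` doubles both the row stride and the frame size, so any code that still assumes one byte per channel would move only half of each frame.

```python
def frame_layout(width, height, bytes_per_channel, channels=3):
    """Return (row_stride, frame_size) in bytes for a tightly packed image.

    Assumes no row padding; real FFmpeg frames may use a larger linesize.
    """
    row_stride = width * channels * bytes_per_channel
    return row_stride, row_stride * height


# BGR24: 3 channels x 1 byte each.
bgr24 = frame_layout(1920, 1080, bytes_per_channel=1)
# BGR48LE: 3 channels x 2 bytes each (16-bit little-endian samples).
bgr48 = frame_layout(1920, 1080, bytes_per_channel=2)

print("BGR24:  ", bgr24)   # (5760, 6220800)
print("BGR48LE:", bgr48)   # (11520, 12441600)
```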

It "kind of" works. The color banding is gone, but the mapping of my data seems wrong. It is not a shader or s/t texture-coordinate issue; something goes wrong in how the data is read when it is copied from memory.
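To illustrate why the copy has to know the sample size, here is a small stdlib-only sketch that packs one row of BGR48LE pixels and reads it back. The pixel values are made up. Reading the bytes as 16-bit little-endian samples recovers the original values, while stepping through them 3 bytes per pixel (as a BGR24 code path would) yields twice as many pixels with meaningless components.

```python
import struct

# Two made-up pixels as (B, G, R) 16-bit values.
pixels = [(1000, 32768, 65535), (0, 256, 4660)]

# Pack them the way AV_PIX_FMT_BGR48LE lays them out: B, G, R, each a
# little-endian unsigned 16-bit integer, pixels stored back to back.
row = b"".join(struct.pack("<3H", *p) for p in pixels)

# Correct reading: 6 bytes per pixel, three '<H' samples each.
as_16bit = [struct.unpack_from("<3H", row, offset) for offset in range(0, len(row), 6)]

# Incorrect reading: 3 bytes per pixel, one byte per sample.
as_8bit = [tuple(row[offset:offset + 3]) for offset in range(0, len(row), 3)]

print(as_16bit)      # [(1000, 32768, 65535), (0, 256, 4660)]
print(len(as_8bit))  # 4
```

Panda3D keeps texture RAM images in BGR(A) order, so on a little-endian machine BGR48LE samples should already line up with `F_rgb16` / `T_unsigned_short`, which would fit the colors coming out right.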

I tried several `ComponentType` and `Format` combinations on the texture, and the best one was:

```python
from panda3d.core import Texture

movieTexture.set_component_type(Texture.T_unsigned_short)
movieTexture.set_format(Texture.F_rgb16)
```

This gives me correct colors and bit depth, but the image is cropped and duplicated. Here is a snapshot of the texture on a 1920x1080 polygon.
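One possible explanation for the cropping, which I have not confirmed in the Panda3D source: if some part of the cursor-to-texture path still sizes its buffer or copy as width × height × 3 bytes, only half of a 16-bit frame gets transferred, and the rest of the texture keeps whatever data was already there. The toy sketch below uses a 4×4 image and a hypothetical `copy_frame` helper standing in for a memcpy; with the 8-bit byte count, only the first half of the rows in the 16-bit destination get filled.

```python
WIDTH, HEIGHT, CHANNELS = 4, 4, 3


def make_bgr48_frame(width, height):
    """Build a BGR48LE-sized frame where every byte of row y holds y + 1."""
    row_bytes = width * CHANNELS * 2
    return b"".join(bytes([y + 1]) * row_bytes for y in range(height))


def copy_frame(src, dst, byte_count):
    """Hypothetical stand-in for a memcpy of byte_count bytes into dst."""
    dst[:byte_count] = src[:byte_count]


src = make_bgr48_frame(WIDTH, HEIGHT)
dst = bytearray(len(src))  # destination sized for 16-bit samples, zeroed

# Byte count computed as if samples were 8-bit: half of what is needed.
copy_frame(src, dst, WIDTH * HEIGHT * CHANNELS)

row_bytes = WIDTH * CHANNELS * 2
filled_rows = [y for y in range(HEIGHT) if any(dst[y * row_bytes:(y + 1) * row_bytes])]
print(filled_rows)  # [0, 1]
```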

By contrast, the same video with

```cpp
_pixel_format = (int)AV_PIX_FMT_BGR24;
```

and

```python
movieTexture.set_component_type(Texture.T_unsigned_byte)
movieTexture.set_format(Texture.F_rgb)
```

looks correct (but with some posterization, because it is rendered at 8 bits per channel).
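The posterization in the 8-bit version is expected: going from 16 to 8 bits per channel maps every 256 consecutive 16-bit values onto a single 8-bit value. A small sketch with a smooth, dark ramp (values chosen only for illustration, truncating with a right shift rather than rounding):

```python
# A smooth 16-bit ramp covering the darkest 1/16 of the range.
ramp_16 = list(range(0, 4096))

# Truncate to 8 bits per channel by dropping the low byte.
ramp_8 = [v >> 8 for v in ramp_16]

print(len(set(ramp_16)))  # 4096 distinct levels
print(len(set(ramp_8)))   # 16 distinct levels, i.e. visible bands
```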

Does anyone have an idea what I am doing wrong, or what the right combination and handling of the data is, from FFmpeg all the way to the texture, to make this work correctly?

Thanks so much in advance!