Thanks for your reply.

I followed your instructions and added `graphics_engine->render_frame()`, and it finally works:

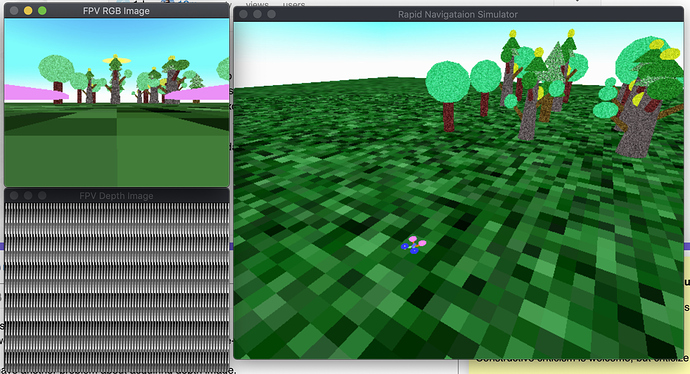

But now I have another problem: acquiring the depth image. A similar topic was discussed before and I followed its code, but unfortunately it didn't work for me. As you can see above, the depth image is incorrect. Some errors were also printed:

    Known pipe types:
      CocoaGraphicsPipe
    (all display modules loaded.)
    :display:gsg:glgsg(error): GL error 0x502 : invalid operation
    :display:gsg:glgsg(error): An OpenGL error has occurred. Set gl-check-errors #t in your PRC file to display more information.
    :display:gsg:glgsg(error): GL error 0x502 : invalid operation
    :display:gsg:glgsg(error): An OpenGL error has occurred. Set gl-check-errors #t in your PRC file to display more information.

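For reference, this is a minimal sketch of how I understand the setting named in the log message would be enabled. The file name `Config.prc` is an assumption; any PRC file that Panda3D actually loads should do:

```
# Config.prc (assumed file name)
# Enable the extra OpenGL error reporting that the log message suggests
gl-check-errors #t
```
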
This is my code:

    void Scene::setup_depth_camera() {
        WindowProperties _wp;
        panda_framework->get_default_window_props(_wp);
        _wp.set_title(_name);
        _wp.set_size(320, 240);

        FrameBufferProperties _fbp;
        _fbp.set_rgb_color(true);
        _fbp.set_alpha_bits(1);
        _fbp.set_depth_bits(1);

        auto _gpo = window_framework->get_graphics_output();
        auto _buffer_depth = panda_framework->get_graphics_engine()->make_output(
            panda_framework->get_default_pipe(), _name, -2, _fbp, _wp,
            GraphicsPipe::BF_refuse_window, _gpo->get_gsg(), _gpo);

        auto _texture_depth = new Texture();
        _buffer_depth->add_render_texture(_texture_depth, GraphicsOutput::RTM_copy_ram, GraphicsOutput::RTP_depth_stencil);
        _texture_depth->set_minfilter(Texture::FilterType::FT_shadow);
        _texture_depth->set_magfilter(Texture::FilterType::FT_shadow);

        auto _camera_depth = new Camera(_name + "_Depth");
        auto _lens_d = _camera_depth->get_lens();
        _lens_d->set_fov(90, 60);
        //_lens_d->set_focal_length(0.028);
        _lens_d->set_film_size(0.032, 0.024);
        _lens_d->set_near(0.05);
        _lens_d->set_far(100);

        auto _camera_depth_np = window_framework->get_render().attach_new_node(_camera_depth);
        _camera_depth_np.set_pos(0.05, 0.0, 0.1);
        _camera_depth_np.set_hpr(-90, 0, 0);

        auto _region_depth = _buffer_depth->make_display_region();
        _region_depth->set_camera(_camera_depth_np);
        _camera_depth_np.reparent_to(window_framework->get_render());

        auto shader = Shader::load(Shader::SL_GLSL, "glsl-simple.vert", "glsl-simple.frag");
        _camera_depth_np.set_shader(shader);
    }

    void Scene::get_depth_image(cv::Mat &image) {
        if(_texture_depth->might_have_ram_image()) {
            CPTA_uchar _pic = _texture_depth->get_ram_image();
            void *_ptr = (void *) _pic.p();
            image = cv::Mat(_texture->get_y_size(), _texture->get_x_size(),
                            CV_8UC(_texture->get_num_components()), _ptr,
                            cv::Mat::AUTO_STEP);
            cv::flip(image, image, 0);
        }
    }