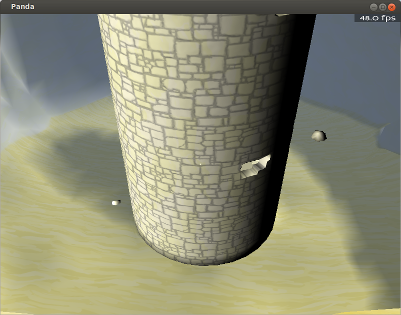

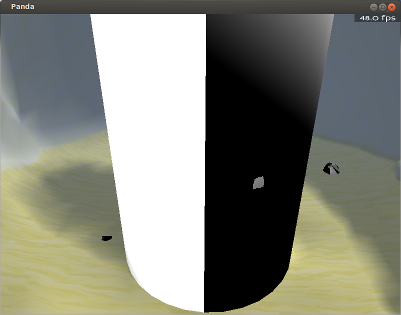

I’m currently attempting to implement a normal mapping shader. Based on what I’ve read, my shader looks as though it should do the job, but it doesn’t, and I’m really not seeing where I’m going wrong.

The shader in this post is reported by the poster to work, and has been one of the references that I’ve used.

Below is what I have thus far; I’m sorry to dump so much code, but I’m really not sure of where I’m going wrong…

It might be worth noting that my normal map is intended to be tiled, as it corresponds to a tiled diffuse map (specifically, stone wall-blocks). The diffuse map seems to tile correctly, so I would expect the normal map to do so as well. The model does seem to have tangents and binormals, as exported via YABEE.

Actual code:

(There are a few bits in here that aren’t used by the normal-mapping code, but they shouldn’t take up much space.)

Main shader:

    void vshader(
        in float4 vtx_texcoord0: TEXCOORD0,
        in float4 vtx_position: POSITION,
        in float3 vtx_normal: NORMAL,
        in float3 vtx_tangent0,
        in float3 vtx_binormal0,
        in float4 vtx_color: COLOR,
        in uniform float4x4 mat_modelproj,
        in uniform float4x4 mstrans_world,
        out float4 l_texcoord0: TEXCOORD0,
        out float4 l_position: POSITION,
        out float4 l_color: COLOR,
        out float3 l_normal,
        out float3 l_tangent0,
        out float3 l_binormal0,
        out float4 l_screenVtxPos
    )
    {
        l_position = mul(mat_modelproj, vtx_position);
        l_texcoord0 = vtx_texcoord0;
        l_normal = vtx_normal.xyz;
        l_tangent0 = vtx_tangent0;
        l_binormal0 = -vtx_binormal0;
        l_screenVtxPos = mul(mat_modelproj, vtx_position);
        l_color = vtx_color;
    }

    void fshader(
        uniform sampler2D tex_0,
        in float4 l_texcoord0: TEXCOORD0,
        in float3 l_normal,
        in float3 l_tangent0,
        in float3 l_binormal0,
        in float4 l_screenVtxPos,
        in float4 l_color: COLOR,
        in uniform float4x4 mstrans_world,
        in uniform sampler2D tex_normal,
        out float4 o_color: COLOR0
    )
    {
        float dist = abs(l_screenVtxPos.z);
        o_color = tex2D(tex_0, l_texcoord0.xy);
        float3 normal = normalMap(l_normal, l_tangent0, l_binormal0, tex_normal, l_texcoord0);

        // This lighting is temporary; my main lighting shader is a little more
        // complex, and I wanted to remove that complexity while
        // attempting to fix the normal-mapping.
        float4 lightDir4 = float4(-1, -1, 1, 0);
        float3 lightDir = normalize((mul(mstrans_world, lightDir4)).xyz);
        o_color = o_color*dot(normal, lightDir);
    }

The normal-mapping function:

    float3 normalMap(
        in float3 l_normal,
        in float3 l_tangent0,
        in float3 l_binormal0,
        in uniform sampler2D tex_normal,
        in float4 l_texcoord0)
    {
        float4 normalOffsetVec = tex2D(tex_normal, l_texcoord0.xy)*2.0 - 1.0;
        float3 right = l_binormal0*normalOffsetVec.x;
        float3 up = l_tangent0*normalOffsetVec.y;
        float3 norm = l_normal*normalOffsetVec.z;
        float3 result = normalize(up + right + norm);
        return result;
    }

Use of the shader in Python script:

    # The shader is only intended to be applied to a part of
    # the model; another shader has by this point already
    # been applied to a NodePath above this, with the
    # intention being that this shader replaces that.
    tower = geometry.find("**/Cylinder.001")
    if not tower.isEmpty():
        print "found tower"
        tower.setShader(loader.loadShader("Adventuring/lightingWithNormalShader.cg"))
        tower.setShaderInput("tex_normal", loader.loadTexture(LEVEL_TEX_FILES + "wall_normal.png"))

Does anyone see where I’m going wrong?
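For anyone who wants to check the arithmetic outside Panda3D, here is a minimal plain-Python sketch of what the `normalMap` function above appears to compute. The helper names (`normalize`, `decode_texel`, `normal_map`) and the sample vectors are made up for illustration and are not part of the project. It only mirrors the weighting in the Cg code (binormal by x, tangent by y, normal by z) and does not claim that this weighting is the correct one.

```python
import math


def normalize(v):
    """Scale a 3-component vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def decode_texel(rgb):
    """Map colour channels from [0, 1] to [-1, 1], like tex2D(...)*2.0 - 1.0."""
    return tuple(c * 2.0 - 1.0 for c in rgb)


def normal_map(normal, tangent, binormal, texel_rgb):
    """Combine the basis vectors using the same weights as the Cg normalMap."""
    x, y, z = decode_texel(texel_rgb)
    # right = binormal * x, up = tangent * y, norm = normal * z
    summed = tuple(
        b * x + t * y + n * z
        for n, t, b in zip(normal, tangent, binormal)
    )
    return normalize(summed)


# Hypothetical basis: normal along +Z, tangent along +X, binormal along +Y.
N = (0.0, 0.0, 1.0)
T = (1.0, 0.0, 0.0)
B = (0.0, 1.0, 0.0)

# A "flat" normal-map texel (0.5, 0.5, 1.0) should leave the normal unchanged.
flat = normal_map(N, T, B, (0.5, 0.5, 1.0))

# A texel with only the red channel raised tilts the result along the binormal.
tilted = normal_map(N, T, B, (1.0, 0.5, 0.5))
```

With a flat texel the result equals the interpolated surface normal, which gives one simple case to compare against what the shader draws.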