Hey!

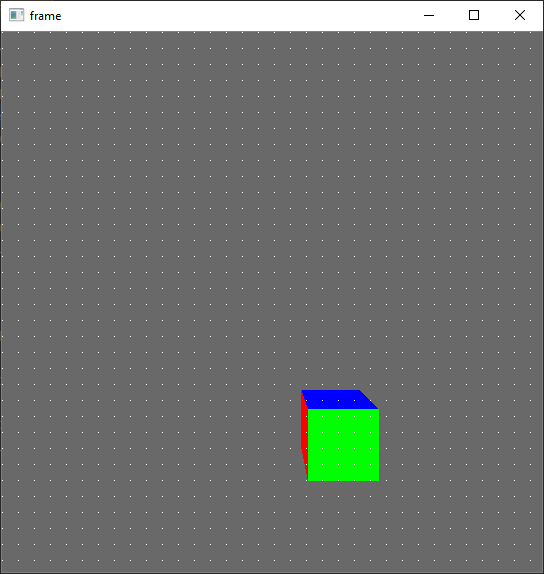

I'm trying to use CollisionRays to scan an offscreen-rendered image for objects (this will later be used to check object visibility). The idea of my test program is to draw a black pixel at each sampled position where an object is found (not working yet) and a white pixel where no object is found.

I based my program on a working mouse object-picker example, but I can't figure out why every picker ray returns `None`. I guess something is different when rendering to an offscreen buffer. Are there any parameters I need to set to make this work? Or does anyone have an idea what the problem could be?

I also found a different way to check object visibility, using colors as object IDs, but it's too slow for my purpose because I only need a few rays per image.
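
For context, the color-as-ID idea boils down to packing an integer ID into a flat RGB color when rendering and unpacking it again when reading a pixel back. Here is a minimal sketch of just that encoding step; the helper names `id_to_rgb` and `rgb_to_id` are hypothetical, and it assumes 8 bits per channel with no lighting or antialiasing altering the rendered colors:

```python
def id_to_rgb(object_id):
    """Pack an integer ID (0 .. 2**24 - 1) into an 8-bit (r, g, b) tuple."""
    if not 0 <= object_id < 2 ** 24:
        raise ValueError("object_id must fit in 24 bits")
    return ((object_id >> 16) & 0xFF, (object_id >> 8) & 0xFF, object_id & 0xFF)


def rgb_to_id(rgb):
    """Recover the integer ID from an (r, g, b) tuple of 8-bit channel values."""
    r, g, b = rgb
    return (int(r) << 16) | (int(g) << 8) | int(b)
```

Reading IDs back this way means rendering and copying a whole extra frame, which is why it is expensive when only a handful of sample points are needed.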

This is the current state of the code. It is supposed to color the sampled pixels on the object black, but at the moment it colors all of them white:

    import cv2 as cv
    import numpy as np
    from direct.showbase.ShowBase import ShowBase
    from panda3d.core import (
        FrameBufferProperties, WindowProperties, GraphicsPipe, GraphicsOutput,
        CollisionNode, GeomNode, CollisionRay, CollisionHandlerQueue,
        CollisionTraverser, LensNode, Camera,
    )
    from panda3d.core import Texture, PerspectiveLens


    class Simulator(ShowBase):

        def __init__(self, width=540, height=540, fov=80):
            ShowBase.__init__(self, fStartDirect=True, windowType='offscreen')
            self.width, self.height = width, height

            window_props = WindowProperties.size(width, height)
            frame_buffer_props = FrameBufferProperties()
            frame_buffer_props.rgb_color = 1
            frame_buffer_props.color_bits = 24
            frame_buffer_props.depth_bits = 24

            self.buffer = self.graphicsEngine.make_output(
                self.pipe, 'Image Buffer', -2, frame_buffer_props, window_props,
                GraphicsPipe.BFRefuseWindow, self.win.getGsg(), self.win)
            self.buffer.addRenderTexture(Texture(), GraphicsOutput.RTMCopyRam)

            lens = PerspectiveLens()
            lens.set_film_size((width, height))
            lens.set_fov(fov)
            lens.set_near_far(0.1, 1000)

            self.camera1 = self.makeCamera(self.buffer, lens=lens, camName='Image Camera')
            self.camera1.reparentTo(self.render)
            self.attach_picker(self.camera1)

        def attach_picker(self, camera):
            pickerNode = CollisionNode('ray')
            pickerNP = camera.attachNewNode(pickerNode)
            pickerNode.setFromCollideMask(GeomNode.getDefaultCollideMask())
            self.pickerRay = CollisionRay()
            pickerNode.addSolid(self.pickerRay)
            pickerNP.show()

            self.rayQueue = CollisionHandlerQueue()
            self.cTrav = CollisionTraverser()
            self.cTrav.addCollider(pickerNP, self.rayQueue)

        def render_image(self):
            self.graphics_engine.render_frame()
            tex = self.buffer.getTexture()
            data = tex.getRamImage()
            image = np.frombuffer(data, np.uint8)
            image.shape = (tex.getYSize(), tex.getXSize(), tex.getNumComponents())
            return np.flipud(image)

        def get_object_id(self, x, y):
            x = x / (self.width // 2) - 1  # convert coordinate system to [-1, 1]
            y = 1 - y / (self.height // 2)  # convert coordinate system to [-1, 1]
            self.pickerRay.setFromLens(self.camera1.node(), x, y)

            if self.rayQueue.getNumEntries() > 0:
                self.rayQueue.sortEntries()
                entry = self.rayQueue.getEntry(0)
                pickedNP = entry.getIntoNodePath()
                print("ok")
                return pickedNP
            return None


    if __name__ == '__main__':
        width, height = 540, 540
        sim = Simulator(width, height)

        box = sim.loader.loadModel("models/misc/rgbCube")
        box.setPos((1, 5, -2))
        box.reparentTo(sim.render)

        out = sim.render_image().copy()

        # scan some image points for object collisions
        for x in range(0, width, 16):
            for y in range(0, height, 16):
                out[y, x, :] = 255
                object = sim.get_object_id(x, y)
                if object is not None:
                    print(object)
                    out[y, x, :] = 0

        cv.imshow("frame", out)
        cv.waitKey(0)
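
To rule out the coordinate conversion as the culprit, the mapping done in `get_object_id` can be checked in isolation. The sketch below uses a hypothetical helper, `pixel_to_film`, and assumes image pixel (0, 0) is the top-left corner and that the ray should pass through the pixel centre (the code above aims at the pixel's top-left corner instead). It maps pixel coordinates to the [-1, 1] range that `CollisionRay.setFromLens` takes, with y pointing up:

```python
def pixel_to_film(x, y, width, height):
    """Map image pixel (x, y) to lens film coordinates in [-1, 1].

    Assumes pixel (0, 0) is the top-left corner of the image and samples the
    pixel centre; film coordinates have x to the right and y upwards.
    """
    fx = 2.0 * (x + 0.5) / width - 1.0
    fy = 1.0 - 2.0 * (y + 0.5) / height
    return fx, fy
```

With this helper, the two conversion lines in `get_object_id` would become `x, y = pixel_to_film(x, y, self.width, self.height)`. Apart from the half-pixel offset, the results match the original formulas for even image sizes, so the conversion is probably not what makes every ray return `None`.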